
File sharing between host and container (docker run -d -p -v).Docker Networks - Bridge Driver Network.More on docker run command (docker run -it, docker run -rm, etc.).Docker image and container via docker commands (search, pull, run, ps, restart, attach, and rm).Working with Docker images : brief introduction.Nginx image - share/copy files, Dockerfile.volume="/var/run/docker.sock:/var/run/docker.sock:ro" \ĭ/beats/filebeat:7.6.2 filebeat -e -strict.perms=false \ volume="/var/lib/docker/containers:/var/lib/docker/containers:ro" \ volume="$(pwd)/:/usr/share/filebeat/filebeat.yml:ro" \

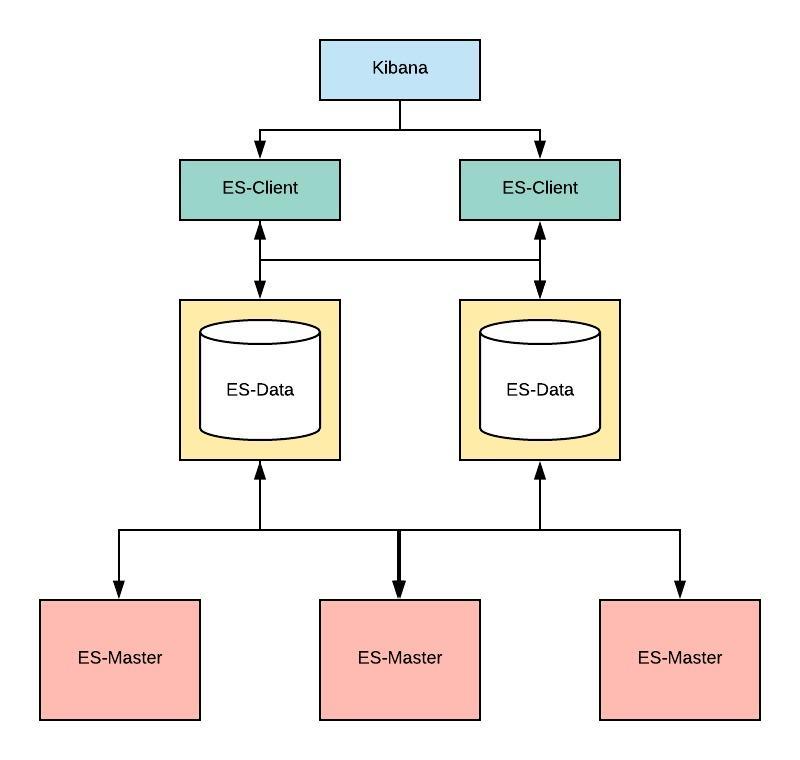

With docker run, the volume mount can be specified like this: We'll configure Filebeat on Docker by providing the via a volume mount. To test this works, we may want to restart the other containers ("elasticsearch" and "kibana"): The conventional approach is to provide a configuration file via a volume mount, but it's also possible to create a custom image with our configuration included.ĭownload an example configuration file as a starting point: The Docker image provides several methods for configuring Filebeat. Loaded machine learning job configurations Setting up ML using setup -machine-learning is going to be removed in 8.0.0. Loading dashboards (Kibana must be running and reachable) $ docker run -net=mynetwork -name filebeat /beats/filebeat:7.6.2 setup -E =kibana:5601 -E = Running Filebeat with the setup command will create the index pattern and load visualizations, dashboards, and machine learning jobs. $ docker run -p 9200:9200 -p 9300:9300 -net=mynetwork -name elasticsearch -e "discovery.type=single-node" /elasticsearch/elasticsearch:7.6.2 $ docker network create mynetwork -driver bridge We need to make sure that the "elasticsearch" and "kibana" containers are running with dokcer network (here, "mynetwork"): Each harvester reads a single log for new content and sends the new log data to libbeat, which aggregates the events and sends the aggregated data to the output that we've configured for Filebeat.įor the latest updates on working with Elastic stack and Filebeat, skip this and please check Docker - ELK 7.6 : Logstash on Centos 7.Īs discussed earlier, the filebeat can directly ship logs to elasticsearch bypassing optional Logstash.

For each log that Filebeat locates, Filebeat starts a harvester. When we start Filebeat, it starts one or more inputs that look in the locations we've specified for log data. However, adding context to the log messages by parsing them up into separate fields, filtering out unwanted bits of data and enriching others - cannot be handled without Logstash. Since Filebeat ships data in JSON format, Elasticsearch should be able to parse the timestamp and message fields without too much hassle. We can ship logs on hosts via Filebeat directly into Elasticsearch. Filebeat (log files), and the other members of the Beats family (Packetbeat: network metrics), Metricbeat: server metrics), acts as a lightweight agent deployed on the edge host, pumping data into Logstash for aggregation, filtering and enrichment. That's why we will almost always need to use Filebeat and Logstashin tandem. However, it cannot, in most cases, turn our logs into easy-to-analyze structured log messages using filters for log enhancements. Installed as an agent on our servers, Filebeat monitors the log files or locations that we specify, collects log events, and forwards them either to Elasticsearch or Logstash for indexing.įilebeat is one of the best log file shippers out there today - it’s lightweight, supports SSL and TLS encryption, supports back pressure with a good built-in recovery mechanism (it records the last successful line indexed in the registry, so in case of network issues or interruptions in transmissions, remembers where it left off when re-establishing a connection). Filebeat is a lightweight shipper for forwarding and centralizing log data.
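The harvester and registry behaviour described above can be illustrated with a small Python sketch. This is a toy model, not Filebeat's actual implementation: the function name `harvest`, the JSON registry layout, and the file names in the demo are all assumptions made for illustration. It only shows the idea of reading new content from one log and remembering the last offset, so that a later run picks up where the previous one left off.

```python
import json
import os
import tempfile


def harvest(log_path, registry_path):
    """Return complete lines added to log_path since the previous call.

    Hypothetical helper: the registry is a JSON file mapping each log
    path to the byte offset of the last line that was fully read.
    """
    registry = {}
    if os.path.exists(registry_path):
        with open(registry_path) as f:
            registry = json.load(f)
    offset = registry.get(log_path, 0)

    events = []
    with open(log_path, "rb") as f:
        f.seek(offset)
        while True:
            line = f.readline()
            if not line.endswith(b"\n"):
                # End of file, or a line still being written: stop here
                # and leave the offset before the incomplete data.
                break
            events.append(line.decode("utf-8").rstrip("\r\n"))
            offset = f.tell()

    # Persist the new offset so the next call resumes from this point.
    registry[log_path] = offset
    with open(registry_path, "w") as f:
        json.dump(registry, f)
    return events


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log = os.path.join(tmp, "app.log")
        reg = os.path.join(tmp, "registry.json")
        with open(log, "w") as f:
            f.write("first\nsecond\n")
        print(harvest(log, reg))  # lines seen on the first pass
        with open(log, "a") as f:
            f.write("third\n")
        print(harvest(log, reg))  # only the newly appended line
```

Keeping the offset in a file outside the process is what lets the sketch survive a restart; a real shipper would additionally only advance the offset once the output has acknowledged the events.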
